

Deepseek AI

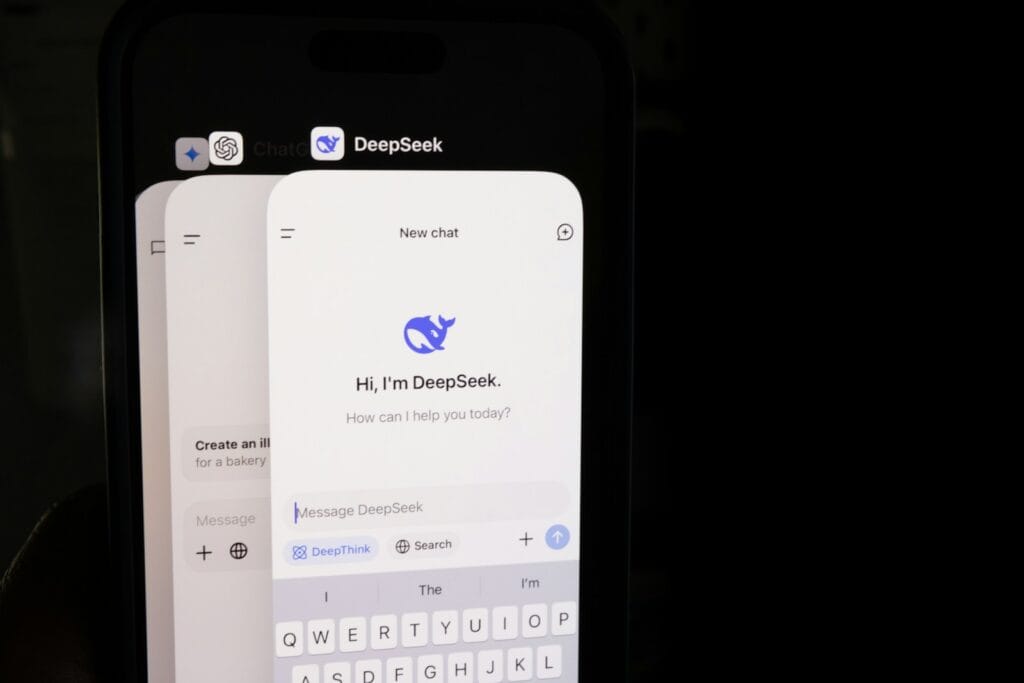

A detailed case study showing how a company migrated from Claude to DeepSeek, improving performance, reducing costs, and optimizing AI workflows.

Switching AI models is not like switching coffee brands. You don’t just swap it out and hope nobody notices. Entire pipelines break, outputs change tone, and suddenly your “stable” system behaves like it just discovered chaos.

This case study explores how a FinTech-style company migrated from Claude to DeepSeek, why they did it, what broke along the way, and what actually improved.

Claude was initially selected for three main reasons:

For early-stage systems, Claude worked well in:

But as the company scaled, cracks began to show.

As API usage increased, costs scaled linearly—and painfully.
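Under flat per-token pricing, spend grows in direct proportion to volume. The sketch below makes that arithmetic explicit. The request volumes, tokens per request, and per-million-token price are hypothetical placeholders, not figures from this case study.

```python
# Hypothetical linear cost model for a per-token-priced LLM API.
# All numbers used here are illustrative placeholders, not real pricing.

def monthly_cost(requests_per_day: int, tokens_per_request: int,
                 usd_per_million_tokens: float, days: int = 30) -> float:
    """Return estimated monthly spend in USD under flat per-token pricing."""
    total_tokens = requests_per_day * tokens_per_request * days
    return total_tokens / 1_000_000 * usd_per_million_tokens


if __name__ == "__main__":
    price = 5.0  # hypothetical USD per 1M tokens
    for volume in (10_000, 100_000, 1_000_000):
        cost = monthly_cost(volume, tokens_per_request=1_500,
                            usd_per_million_tokens=price)
        print(f"{volume:>9,} requests/day -> ${cost:,.2f}/month")
```

Because every term is multiplied together, a 10x increase in daily requests produces a 10x increase in the bill. The only levers are fewer tokens per request or a lower per-token price.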

Real-time systems began to suffer:

Fine-tuning and control over outputs were limited compared to newer models.

Claude performed well at general reasoning, but many of the company's tasks were repetitive and structured, so sending them through a general-purpose reasoning model was inefficient.

The company evaluated multiple models before selecting DeepSeek.

DeepSeek was particularly effective for:

A direct switch would have been reckless. Instead, the company followed a phased migration.
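One common way to phase a model migration is a deterministic traffic split: hash a stable key (such as a user or request ID) into a bucket and send a configurable percentage of buckets to the new model. The article does not describe the company's exact mechanism, so this is a minimal sketch under that assumption. The model labels `"deepseek"` and `"claude"` and the function names are hypothetical.

```python
import hashlib


def rollout_bucket(key: str) -> int:
    """Map a stable key (e.g. a user or request ID) to a bucket in [0, 100)."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def choose_model(key: str, new_model_percent: int) -> str:
    """Send roughly new_model_percent% of keys to the new model.

    Assignment is deterministic: the same key always lands on the same model
    for a given percentage. Raising the percentage only moves additional keys
    over, so keys that were already migrated stay migrated.
    """
    if not 0 <= new_model_percent <= 100:
        raise ValueError("new_model_percent must be between 0 and 100")
    # Hypothetical labels; map these to real client calls in practice.
    return "deepseek" if rollout_bucket(key) < new_model_percent else "claude"
```

A rollout might step `new_model_percent` through values like 5, 25, and 100, checking quality and error rates at each stage before moving on.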

Tasks were categorized:

Claude-style prompts did not translate directly.

DeepSeek required:

Differences in formatting required post-processing adjustments.
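The article does not say which formatting differences appeared. One frequent example is a model wrapping structured output in a Markdown code fence or surrounding it with prose. Assuming downstream code expects a JSON object, a small normalization step might look like this sketch (`extract_json` is a hypothetical helper name):

```python
import json
import re

# Matches a fenced block such as ```json ... ``` and captures its body.
_FENCE_RE = re.compile(r"```(?:json)?\s*(.*?)\s*```", re.DOTALL)


def extract_json(raw: str) -> dict:
    """Parse a JSON object from a model reply that may contain extra text.

    Handles three shapes: bare JSON, JSON inside a Markdown code fence,
    and JSON preceded or followed by prose.
    """
    text = raw.strip()
    fenced = _FENCE_RE.search(text)
    if fenced:
        text = fenced.group(1)
    # Fall back to the outermost {...} span if prose surrounds the object.
    start, end = text.find("{"), text.rfind("}")
    if start == -1 or end < start:
        raise ValueError("no JSON object found in model output")
    return json.loads(text[start:end + 1])
```

Centralizing this in one function means prompt or model changes only require updating a single parser, not every consumer.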

DeepSeek significantly improved:

The system could now handle:

Different models produced slightly different answers.
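A simple way to catch these differences is to run the same inputs through both models and compare the outputs before cutting over. The sketch below assumes plain-text outputs and uses the standard library's `difflib.SequenceMatcher` as a rough similarity score. The 0.9 threshold and the function names are hypothetical choices, not values from the case study.

```python
from difflib import SequenceMatcher


def normalize(text: str) -> str:
    """Collapse whitespace and case so cosmetic differences are ignored."""
    return " ".join(text.lower().split())


def agreement_report(pairs, threshold=0.9):
    """Compare (old_output, new_output) pairs and flag low-similarity cases.

    Returns the fraction of pairs at or above the threshold, plus the indices
    of pairs below it, which are the ones worth reviewing by hand.
    """
    flagged = []
    for i, (old, new) in enumerate(pairs):
        ratio = SequenceMatcher(None, normalize(old), normalize(new)).ratio()
        if ratio < threshold:
            flagged.append(i)
    agree_rate = 1 - len(flagged) / len(pairs) if pairs else 1.0
    return agree_rate, flagged
```

Character-level similarity is only a first filter. For structured outputs, comparing parsed fields is usually more meaningful.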

Existing prompts had to be redesigned.

Engineers needed time to understand new model behavior.

After full migration:

Migrating from Claude to DeepSeek wasn’t just a cost-saving decision—it was a strategic shift toward efficiency and scalability.

For companies dealing with high-volume, data-heavy workflows, DeepSeek proved to be a strong alternative.

To reduce costs and improve performance in structured tasks.

It depends on the use case—DeepSeek excels in structured and data-heavy workflows.

Typically weeks to months depending on system complexity.

Output differences, integration challenges, and retraining costs.

Yes, hybrid approaches are common for optimal performance.
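A hybrid setup can start as a routing table keyed by task type. In this sketch, structured, repetitive work goes to DeepSeek and open-ended reasoning stays on Claude. The task categories, model labels, and `route` function are all hypothetical illustrations, not the company's actual configuration.

```python
from enum import Enum


class TaskType(Enum):
    EXTRACTION = "extraction"          # structured, repetitive
    CLASSIFICATION = "classification"  # structured, repetitive
    OPEN_REASONING = "open_reasoning"  # open-ended, judgment-heavy


# Hypothetical routing table. Labels are placeholders, not API model IDs.
ROUTES = {
    TaskType.EXTRACTION: "deepseek",
    TaskType.CLASSIFICATION: "deepseek",
    TaskType.OPEN_REASONING: "claude",
}


def route(task_type: TaskType, default: str = "claude") -> str:
    """Return the model label for a task type, falling back to a default."""
    return ROUTES.get(task_type, default)
```

Keeping the mapping in data rather than scattered `if` statements makes it easy to move a category between models as evaluation results come in.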