Breaking News

Enter your email address below and subscribe to Deepseek AI newsletter

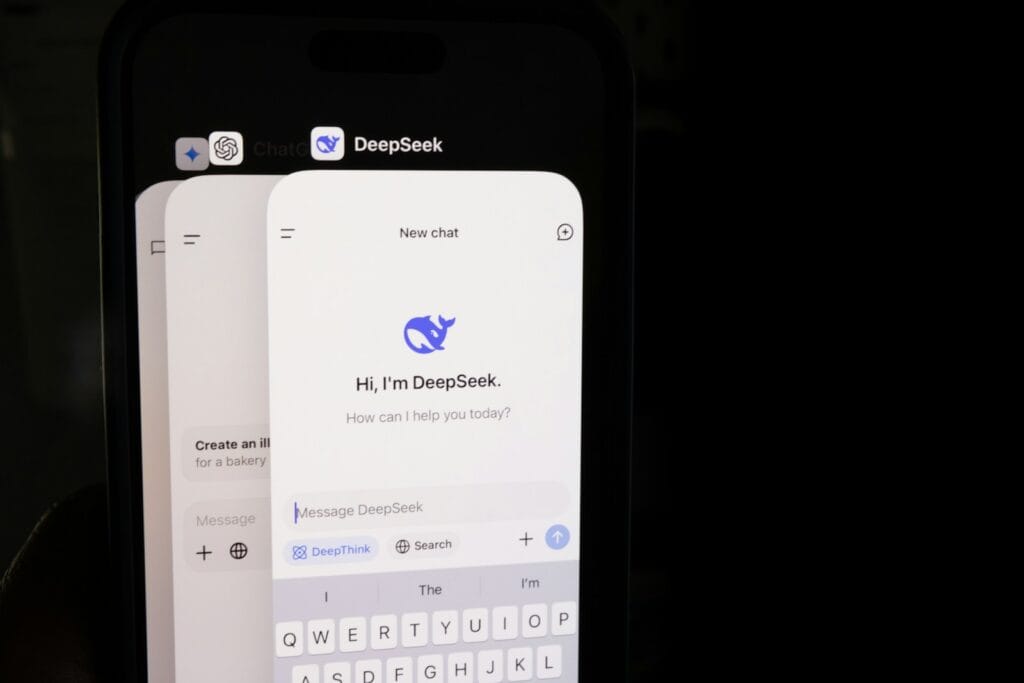

Deepseek AI

DeepSeek V3 is a powerful reasoning model, but it still has limitations such as hallucinations, context limits, and prompt sensitivity.

AI models have advanced significantly in recent years, enabling applications ranging from coding assistants to research analysis tools. However, even the most capable models have limitations that developers and organizations should understand before deploying them in production systems.

DeepSeek V3, developed by DeepSeek, offers strong reasoning capabilities, long-context processing, and support for complex prompts. Despite these strengths, it shares the technical constraints common to large language models.

Understanding these limitations helps teams design more reliable AI systems and avoid unrealistic expectations.

AI models are probabilistic systems that generate responses based on patterns in training data.

This means they can sometimes:

For organizations building AI-powered systems, understanding these risks is essential for responsible deployment.

Like many large language models, DeepSeek V3 may sometimes generate information that appears correct but is inaccurate.

These responses are often called AI hallucinations.

Examples include:

Because of this, AI-generated information should always be verified in critical applications.

Although DeepSeek V3 supports long-context processing, it still operates within a maximum context window.

This means there is a hard limit on how much text the model can process at once.

Developers often solve this by summarizing earlier content or splitting tasks into smaller prompts.
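As a rough illustration of the splitting approach, the sketch below breaks a long text into chunks that each stay under a size budget. It is a minimal sketch, not a DeepSeek utility: the function name `split_into_chunks` is hypothetical, and counting whitespace-separated words is only an assumed stand-in for real token counts, which depend on the model's tokenizer.

```python
def split_into_chunks(text: str, max_tokens: int, overlap: int = 0) -> list[str]:
    """Split text into chunks of at most max_tokens words.

    Assumption: one whitespace-separated word is treated as one token.
    Real tokenizers count differently, so leave headroom in max_tokens.
    """
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    if not 0 <= overlap < max_tokens:
        raise ValueError("overlap must be in the range [0, max_tokens)")

    words = text.split()
    step = max_tokens - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_tokens]))
        # Stop once the current window has reached the end of the text.
        if start + max_tokens >= len(words):
            break
    return chunks


if __name__ == "__main__":
    for chunk in split_into_chunks("a b c d e f g", max_tokens=3, overlap=1):
        print(chunk)
```

A small overlap repeats the last few words of one chunk at the start of the next, which can help preserve continuity between prompts at the cost of some extra tokens.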

DeepSeek V3 is designed for structured reasoning, but it can still make mistakes in multi-step logic.

For example:

Even advanced reasoning models may occasionally produce incorrect conclusions.

AI output quality depends heavily on prompt clarity.

Poor prompts can result in:

Clear instructions usually produce better results.

Example improvement:

Instead of asking:

“Explain this problem.”

Use:

“Explain the problem step by step and identify possible causes.”
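One way to apply this consistently is to assemble prompts from explicit parts instead of writing them ad hoc. The helper below is a hypothetical sketch (the name `build_prompt` and its parameters are illustrative assumptions, not part of any DeepSeek tooling) that turns a vague request into a structured one.

```python
def build_prompt(task: str, context: str = "", step_by_step: bool = True,
                 output_format: str = "") -> str:
    """Assemble a structured prompt from explicit parts (illustrative only)."""
    parts = []
    if context:
        parts.append(f"Context:\n{context}")
    parts.append(f"Task: {task}")
    if step_by_step:
        parts.append("Explain the problem step by step and identify possible causes.")
    if output_format:
        parts.append(f"Format the answer as: {output_format}")
    # Blank lines between sections keep the instructions easy to tell apart.
    return "\n\n".join(parts)


if __name__ == "__main__":
    print(build_prompt(
        task="Explain why the nightly export job fails.",
        context="The job started failing after the database upgrade.",
        output_format="a numbered list",
    ))
```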

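Large language models are trained on large datasets collected up to a fixed point in time, so they do not have access to real-time information unless they are connected to external data systems.

This means the model may struggle with questions about recent events or anything that changed after its training data was collected.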

External data sources are often used to supplement AI responses.

Advanced AI models require significant computational resources.

For developers using the DeepSeek API Platform, costs may increase depending on:

Organizations should monitor token usage carefully when deploying AI at scale.
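A simple way to monitor usage is to accumulate the input and output token counts reported for each request and compare the running cost against a budget. The sketch below assumes separate per-million-token prices for input and output. The class name `UsageTracker` and all prices are hypothetical placeholders, so substitute the current published rates.

```python
from dataclasses import dataclass


@dataclass
class UsageTracker:
    """Accumulates token counts and estimates spend (illustrative sketch)."""
    input_price_per_million: float   # placeholder price, not a real rate
    output_price_per_million: float  # placeholder price, not a real rate
    budget: float
    input_tokens: int = 0
    output_tokens: int = 0

    def record(self, input_tokens: int, output_tokens: int) -> None:
        """Add the token counts reported for one request."""
        self.input_tokens += input_tokens
        self.output_tokens += output_tokens

    @property
    def cost(self) -> float:
        """Estimated spend so far, in the same currency as the prices."""
        total = (self.input_tokens * self.input_price_per_million
                 + self.output_tokens * self.output_price_per_million)
        return total / 1_000_000

    def over_budget(self) -> bool:
        return self.cost > self.budget


if __name__ == "__main__":
    tracker = UsageTracker(input_price_per_million=1.0,
                           output_price_per_million=2.0, budget=1.0)
    tracker.record(input_tokens=500_000, output_tokens=100_000)
    print(f"cost so far: {tracker.cost:.2f}, over budget: {tracker.over_budget()}")
```

In a real deployment, the counts passed to `record` would come from the usage figures returned with each API response rather than from estimates.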

AI models simulate understanding but do not actually comprehend information the way humans do.

They rely on statistical patterns rather than genuine reasoning or awareness.

As a result, they may sometimes produce convincing explanations that are logically flawed.

Developers can reduce many AI limitations by designing systems carefully.

Important outputs should be validated using external tools or human review.
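For structured outputs, part of this validation can be automated before a human ever sees the result. The sketch below assumes the model was asked to reply in JSON and checks that the reply parses and contains the expected fields. The function name `validate_json_output` and the field names are hypothetical, and a check like this catches malformed output only, not factual errors.

```python
import json


def validate_json_output(raw: str, required: dict[str, type]) -> tuple[bool, list[str]]:
    """Check that raw is a JSON object with the required fields and types.

    Returns (is_valid, problems). This only validates structure; it cannot
    tell whether the content itself is accurate.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return False, [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return False, ["top-level value is not an object"]

    problems = []
    for key, expected_type in required.items():
        if key not in data:
            problems.append(f"missing field: {key}")
        elif not isinstance(data[key], expected_type):
            problems.append(f"wrong type for field: {key}")
    return not problems, problems


if __name__ == "__main__":
    reply = '{"answer": "Restart the service", "confidence": 0.8}'
    print(validate_json_output(reply, {"answer": str, "confidence": float}))
```

Outputs that fail the check can be retried or routed to human review instead of being passed downstream.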

Well-structured prompts improve reasoning accuracy.

Smaller prompts often produce more reliable results.

Retrieval systems can provide current information to supplement model responses.
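The idea can be sketched with a toy retriever that picks the passages sharing the most words with the question and places them in the prompt. This is a deliberately simplified illustration: the names `retrieve` and `build_grounded_prompt` are hypothetical, and keyword overlap is an assumed stand-in for the embedding or search-based retrieval a production system would more likely use.

```python
def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Return up to top_k documents that share the most words with the query."""
    query_terms = set(query.lower().split())
    scored = []
    for doc in documents:
        overlap = len(query_terms & set(doc.lower().split()))
        if overlap:  # skip documents with no words in common
            scored.append((overlap, doc))
    # Highest overlap first; ties keep their original order (stable sort).
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_grounded_prompt(query: str, documents: list[str], top_k: int = 2) -> str:
    """Build a prompt that asks the model to answer from retrieved passages."""
    passages = retrieve(query, documents, top_k)
    context = "\n".join(f"- {passage}" for passage in passages)
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


if __name__ == "__main__":
    docs = [
        "The office closes at 6 pm on Fridays",
        "Bananas are yellow",
        "The office opens at 9 am",
    ]
    print(build_grounded_prompt("when does the office close on Fridays", docs, top_k=1))
```

Instructing the model to say when the context is insufficient gives it an alternative to guessing, which ties back to the verification practices described earlier.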

Despite its limitations, DeepSeek V3 performs well in many scenarios.

These include:

When used appropriately, the model can significantly improve productivity.

DeepSeek V3 offers strong reasoning capabilities and long-context processing, but it still shares many limitations common to modern AI models.

Understanding these constraints allows developers and organizations to build more reliable systems while avoiding unrealistic expectations.

When combined with proper verification, prompt design, and monitoring, models like DeepSeek V3 can be powerful tools in AI-driven applications.

Yes. Like all AI models, DeepSeek V3 may occasionally generate incorrect information.

Hallucinations occur when an AI model generates information that appears plausible but is not accurate.

Yes. The model operates within a context window that limits how much text it can process at once.

Yes. Clear prompts usually lead to better and more accurate responses.

AI models do not always have real-time knowledge unless connected to external data systems.

It can be used in production environments, but such deployments should include verification and monitoring systems.

Yes. AI models may occasionally produce incorrect logical steps.

Developers can structure prompts carefully, validate outputs, and use external data sources.

Yes. Important information should be checked using reliable sources.

Yes. New models are designed to improve reasoning, context handling, and reliability.