DeepSeek LLM Context Length and Reasoning Abilities

Large language models are no longer judged solely by their ability to generate text. Today, their value depends heavily on two core capabilities:

- Context length (how much information they can process)

- Reasoning ability (how well they can think through problems)

The DeepSeek LLM, developed by DeepSeek, is designed to perform strongly in both areas. This combination makes it particularly useful for complex workflows such as research analysis, coding, automation, and decision support.

This guide breaks down how DeepSeek LLM handles context and reasoning, why these capabilities matter, and how they impact real-world use.

What Is Context Length in AI Models?

Context length refers to the maximum amount of text an AI model can process at once.

This includes:

- user input (prompt)

- previous conversation messages

- system instructions

- documents or datasets

All of this must fit within the model’s context window.
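As a rough illustration, the check below adds up the token counts of each part and compares the total with the window. The part names, the counts, and the `reserved_for_output` parameter are assumptions made for this sketch; many APIs also count generated tokens against the window, which is why some room is set aside for the reply.

```python
def fits_in_context(part_tokens: dict[str, int],
                    context_window: int,
                    reserved_for_output: int = 0) -> bool:
    """Return True if all input parts plus the reserved output budget fit."""
    total_input = sum(part_tokens.values())
    return total_input + reserved_for_output <= context_window


# Hypothetical token counts for one request.
parts = {
    "system_instructions": 200,
    "previous_messages": 1500,
    "user_prompt": 300,
    "documents": 5000,
}

print(fits_in_context(parts, context_window=8000, reserved_for_output=1000))
```

Running a check like this before sending a request makes it clear which part to shrink when the total does not fit.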

Tokens and Context Windows

AI models process text using tokens.

A token can be:

- a word

- part of a word

- punctuation

- symbols

For example:

- “AI models are powerful” → roughly 4–6 tokens, depending on the tokenizer

- A full research paper → thousands of tokens

The context window is measured in tokens, not characters or words.
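When the model's own tokenizer is not at hand, a quick estimate can still help with budgeting. The sketch below assumes about four characters per token, a common rule of thumb for English text and not a property of any particular DeepSeek tokenizer; `estimate_tokens` and its default ratio are illustrative names and values.

```python
import math


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate from character count (heuristic, not exact)."""
    if chars_per_token <= 0:
        raise ValueError("chars_per_token must be positive")
    if not text:
        return 0
    return math.ceil(len(text) / chars_per_token)


print(estimate_tokens("AI models are powerful"))
```

For billing or hard limits, count with the actual tokenizer of the model being called, since ratios vary by language and content type.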

Why Context Length Matters

Context length directly affects how useful a model is in real scenarios.

A larger context window allows:

- better understanding of long inputs

- improved continuity in conversations

- more accurate analysis of documents

- stronger reasoning across multiple steps

Without sufficient context, even a smart model behaves like someone who forgets half the conversation.

DeepSeek LLM Context Length Capabilities

DeepSeek LLM is designed to support long-context tasks, making it suitable for complex applications.

Key Advantages

- Processes large documents in a single request

- Keeps more of the conversation history in view

- Handles multi-step prompts effectively

- Supports advanced workflows

This is especially valuable in domains where information is dense and interconnected.

How Context Length Impacts Real-World Use

Let’s move beyond theory and talk about actual use cases.

1. Research and Document Analysis

A long-context model can:

- read entire reports

- summarize key insights

- compare multiple sources

Without sufficient context, the model would only analyze fragments.

2. Coding and Development

Developers often need AI to understand:

- entire files

- system architecture

- dependencies

DeepSeek LLM can process larger chunks of code, improving accuracy in:

- debugging

- refactoring

- documentation

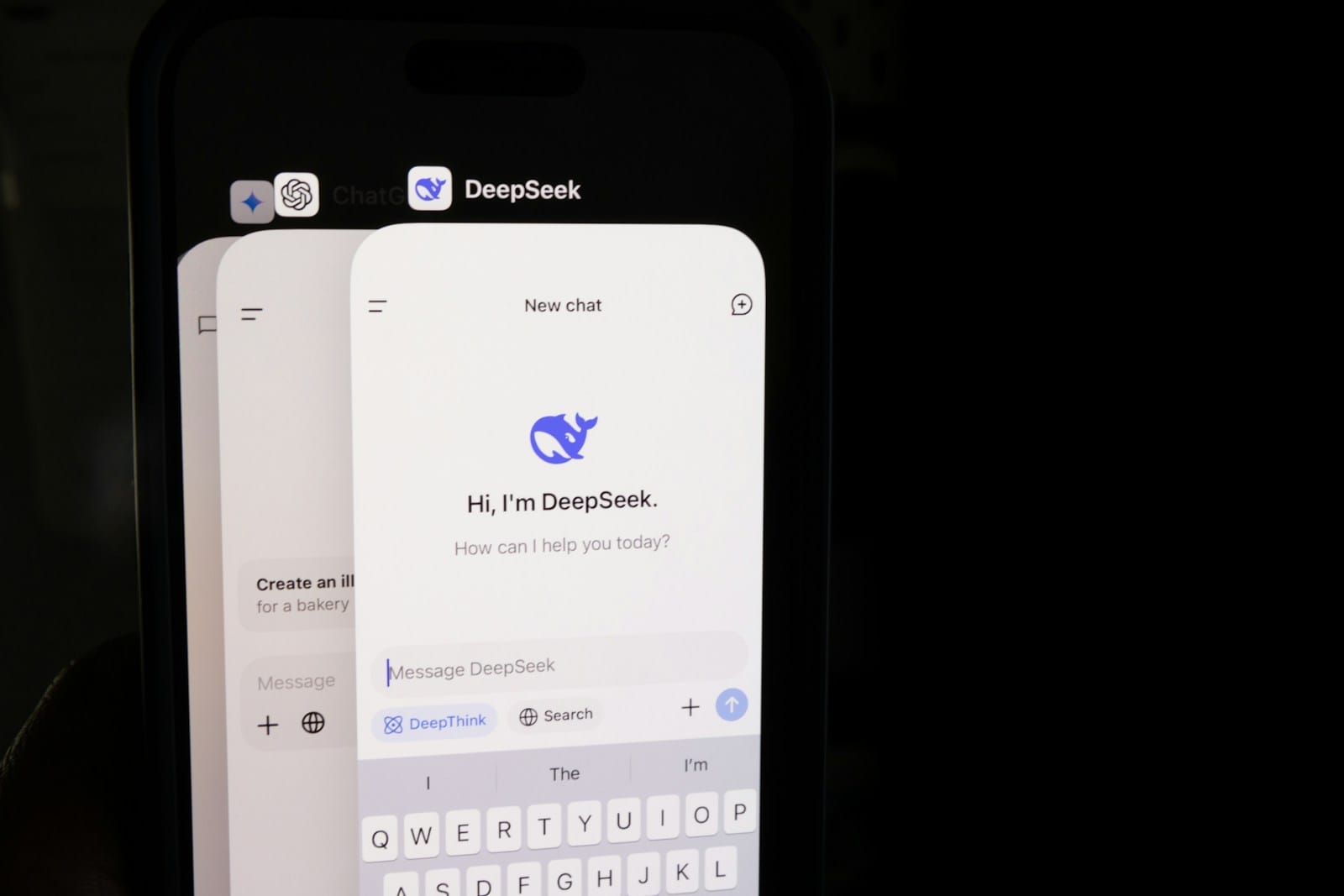

3. Long Conversations

In chat-based applications, context length determines how well the model remembers earlier inputs.

With longer context:

- conversations feel more natural

- follow-ups are more accurate

- less repetition is required

4. AI Agents and Automation

AI agents rely heavily on context to track:

- previous actions

- user goals

- system states

Long context improves decision-making in multi-step workflows.

What Happens When Context Limits Are Exceeded?

Even advanced models have limits.

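When the context limit is exceeded, the outcome depends on the application:

- older messages may be dropped, or the request may be rejected outright

- instructions given early on may be lost

- output becomes inconsistent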

This is why long workflows sometimes “break” unexpectedly.

Example Problem

Setup:

- the prompt includes 10,000 tokens of context

- the model supports 8,000 tokens

Result (assuming the application trims the oldest tokens first):

- first 2,000 tokens are discarded

- critical information may be lost
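The arithmetic in this example can be sketched as follows, assuming a client that drops the oldest tokens first. That is only one possible policy (some APIs return an error for oversized requests instead), and `truncate_oldest` is a hypothetical helper, not part of any DeepSeek SDK.

```python
def truncate_oldest(tokens: list[int], context_window: int) -> tuple[list[int], int]:
    """Keep the most recent tokens that fit; return them and the number dropped."""
    if context_window < 0:
        raise ValueError("context_window must be non-negative")
    overflow = max(0, len(tokens) - context_window)
    return tokens[overflow:], overflow


prompt_tokens = list(range(10_000))  # stand-in for 10,000 token ids
kept, dropped = truncate_oldest(prompt_tokens, context_window=8_000)
print(len(kept), dropped)  # 8000 2000
```

If the system instructions sit at the start of the prompt, they are the first thing lost under this policy.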

Strategies to Manage Context Efficiently

Smart developers don’t just rely on large context. They manage it.

1. Summarization

Compress earlier conversation into shorter summaries.
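A minimal sketch of this idea keeps the latest messages verbatim and replaces everything older with one summary line. The `summarize` argument is a placeholder for whatever produces the summary (in practice probably a model call); the stub used here only counts messages.

```python
from typing import Callable


def compact_history(messages: list[str],
                    keep_recent: int,
                    summarize: Callable[[list[str]], str]) -> list[str]:
    """Replace all but the last `keep_recent` messages with a single summary."""
    if keep_recent < 0:
        raise ValueError("keep_recent must be non-negative")
    if len(messages) <= keep_recent:
        return list(messages)
    split = len(messages) - keep_recent
    older, recent = messages[:split], messages[split:]
    return ["Summary of earlier conversation: " + summarize(older)] + recent


# Stub summarizer for illustration only.
def count_only(older: list[str]) -> str:
    return f"{len(older)} earlier messages"


history = ["m1", "m2", "m3", "m4", "m5"]
print(compact_history(history, keep_recent=2, summarize=count_only))
```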

2. Chunking

Split large documents into smaller sections.
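One way to do this is to split on word boundaries with a small overlap, so that a sentence cut at a boundary still appears in full in one of the chunks. The sizes below are in words for simplicity; a real pipeline would more likely measure chunks in tokens. `chunk_text` is an illustrative helper.

```python
def chunk_text(text: str, chunk_size: int, overlap: int = 0) -> list[str]:
    """Split text into chunks of `chunk_size` words, sharing `overlap` words."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


print(chunk_text("one two three four five six seven", chunk_size=3, overlap=1))
```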

3. Retrieval Systems (RAG)

Use external storage and fetch relevant data dynamically.
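The retrieval step can be sketched without any external service. In this toy version, plain word overlap stands in for the embedding similarity that production RAG systems typically use, and an in-memory list stands in for the external store. The function names and sample documents are made up for the example.

```python
import re


def _words(text: str) -> set[str]:
    """Lowercased alphanumeric words in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Return the `top_k` documents sharing the most words with the query."""
    query_words = _words(query)
    ranked = sorted(documents,
                    key=lambda doc: len(query_words & _words(doc)),
                    reverse=True)
    return ranked[:top_k]


def build_prompt(query: str, documents: list[str], top_k: int = 2) -> str:
    """Put only the retrieved documents into the prompt, then the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, top_k))
    return f"Context:\n{context}\n\nQuestion: {query}"


docs = [
    "Refund policy: refunds are issued within 14 days.",
    "Shipping takes 3 to 5 business days.",
    "Support is available by email.",
]
print(build_prompt("How many days do refunds take?", docs, top_k=1))
```

Only the selected passages enter the context window, so the size of the store no longer limits what the model can draw on.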

4. Prompt Optimization

Remove unnecessary text and focus on relevant inputs.
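A small example of mechanical cleanup: the helper below collapses runs of whitespace and drops blank or repeated lines. It is a sketch under the assumption that exact duplicate lines carry no meaning in the prompt, which will not hold for every input (source code, for instance), and `compact_prompt` is an illustrative name.

```python
def compact_prompt(text: str) -> str:
    """Collapse whitespace and remove blank or exactly repeated lines."""
    seen: set[str] = set()
    kept: list[str] = []
    for raw_line in text.splitlines():
        line = " ".join(raw_line.split())  # normalize inner whitespace
        if not line or line in seen:
            continue
        seen.add(line)
        kept.append(line)
    return "\n".join(kept)


messy = "Summarize   the report.\n\nSummarize the report.\n  Focus on risks. "
print(compact_prompt(messy))
```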

Understanding Reasoning in DeepSeek LLM

Now the interesting part. Context alone is of little use without reasoning.

Reasoning refers to the model’s ability to:

- analyze information

- follow logical steps

- solve structured problems

- generate explanations

Types of Reasoning Supported

1. Logical Reasoning

- conditional thinking

- if-then scenarios

- decision trees

2. Mathematical Reasoning

- solving equations

- word problems

- structured calculations

3. Analytical Reasoning

- comparing arguments

- identifying patterns

- explaining relationships

4. Multi-Step Reasoning

This is where things get serious.

Example:

“Analyze this dataset, identify trends, and recommend a strategy.”

The model must:

- understand data

- extract patterns

- evaluate outcomes

- produce recommendations

How DeepSeek LLM Combines Context + Reasoning

This is where the model becomes powerful.

Context provides information.

Reasoning provides understanding.

Together, they enable:

- deep analysis

- structured outputs

- intelligent workflows

Example

Task:

“Analyze a 20-page report and identify risks.”

Requires:

- long context (read document)

- reasoning (identify risks)

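Without long context, the model sees only part of the report; without reasoning, it can only restate what it read. Either way, the output is of little use.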

Real-World Applications

1. Enterprise Knowledge Systems

Companies use AI to:

- analyze internal documents

- generate insights

- support decisions

2. Financial Analysis

AI models can:

- interpret reports

- identify trends

- evaluate risks

3. Healthcare Research

Used for:

- summarizing studies

- comparing treatments

- analyzing outcomes

4. Legal Document Analysis

AI can:

- interpret contracts

- identify clauses

- highlight risks

5. Software Development

Supports:

- debugging

- architecture explanation

- code optimization

Limitations of Context and Reasoning

Let’s not pretend this is magic.

1. Context Still Has Limits

Even large models cannot handle infinite input.

2. Reasoning Can Be Wrong

AI may:

- make logical errors

- skip steps

- produce false conclusions

3. Prompt Sensitivity

Vague or ambiguous prompts produce vague or wrong results.

4. No True Understanding

The model simulates reasoning; it doesn’t “think” like humans.

Best Practices for Developers

Use Clear Instructions

Specific prompts improve accuracy.

Ask for Step-by-Step Output

Encourages structured reasoning.
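For instance, a prompt can spell out the steps and ask for the result of each one. The template wording and the `step_by_step_prompt` helper below are assumptions for illustration; no specific phrasing is required by DeepSeek.

```python
def step_by_step_prompt(task: str, steps: list[str]) -> str:
    """Build a prompt that asks the model to work through numbered steps."""
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return (
        f"Task: {task}\n\n"
        "Work through these steps in order and show the result of each:\n"
        f"{numbered}\n\n"
        "Finish with a line starting with 'Answer:'."
    )


prompt_text = step_by_step_prompt(
    "Analyze this dataset, identify trends, and recommend a strategy.",
    ["Understand the data", "Extract patterns",
     "Evaluate outcomes", "Produce recommendations"],
)
print(prompt_text)
```

A fixed final marker such as `Answer:` also makes the reply easier to parse in code.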

Validate Outputs

Always verify critical information.
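Part of that verification can be automated when the model is asked for structured output. The sketch below assumes the model was instructed to reply with a JSON object containing `summary` and `risks`; those key names are hypothetical. It checks only the shape of the reply, not whether the content is true.

```python
import json


def validate_output(raw: str, required_keys: tuple[str, ...] = ("summary", "risks")) -> dict:
    """Parse a model reply as JSON and check that the expected keys are present."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")
    missing = [key for key in required_keys if key not in data]
    if missing:
        raise ValueError(f"reply is missing keys: {missing}")
    return data


reply = '{"summary": "Revenue is flat.", "risks": ["supplier concentration"]}'
print(validate_output(reply)["risks"])
```

Factual claims in the reply still need a human or an independent source to confirm them.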

Combine with External Systems

Use databases and APIs for real-time data.

Future of Context and Reasoning

AI models are evolving toward:

- larger context windows

- better reasoning accuracy

- improved consistency

Expect:

- full-document analysis

- more reliable AI agents

- better decision support systems

Final Thoughts

The combination of context length and reasoning ability defines how useful an AI model is in real-world applications.

The DeepSeek LLM stands out by supporting both:

- long-context processing

- structured reasoning workflows

While limitations still exist, these capabilities make it a strong choice for developers building advanced AI systems.

30 FAQs

1. What is DeepSeek LLM?

A large language model designed for reasoning and long-context tasks.

2. What is context length in AI?

The amount of text the model can process at once.

3. Why is context length important?

It allows better understanding of long inputs.

4. What is a context window?

The maximum token limit a model can handle.

5. Does DeepSeek support long documents?

Yes, within its context limits.

6. What happens when context is exceeded?

Depending on the application, older information is dropped or the request is rejected.

7. What is reasoning in AI?

The ability to analyze and solve problems.

8. Can DeepSeek perform logical reasoning?

Yes, including multi-step tasks.

9. Is DeepSeek good for coding?

Yes, especially for structured analysis.

10. Can it analyze research papers?

Yes, if within context limits.

11. Does it understand conversations?

It tracks context within a session.

12. Is context the same as memory?

No, context is temporary.

13. Can DeepSeek remember past chats?

Only within the active session.

14. What are tokens?

Units of text used by AI models.

15. How can I improve context usage?

Use summarization and chunking.

16. What is RAG?

Retrieval-Augmented Generation using external data.

17. Can DeepSeek reason step by step?

Yes, if prompted correctly.

18. Are reasoning results always correct?

No, verification is needed.

19. What tasks benefit from long context?

Research, coding, document analysis.

20. Can it handle multi-step workflows?

Yes.

21. Is it suitable for enterprise use?

Yes, with proper validation.

22. Does prompt design matter?

Very much.

23. Can it replace human reasoning?

No.

24. What are its limitations?

Context limits, errors, prompt sensitivity.

25. Can it process real-time data?

Not without external systems.

26. Is it good for automation?

Yes.

27. Does it support APIs?

Yes.

28. Can it analyze datasets?

Yes, in structured prompts.

29. Will context limits increase in future?

Yes, likely.

30. Is DeepSeek worth using?

It depends on the use case, but it is a strong option for reasoning tasks.